Yunong Liu

Research Engineer at Luma AI

I work on multimodal image/video generation, world models, structured visual generation, post-training, reward modeling, and multimodal evaluation.

I lead Layering, a structured visual generation system that turns flat generated images or design media into editable raster, text, vector, and alpha-aware components for multi-turn human and agent editing.

Before Layering, I worked across Luma's image and video generation models, including Ray3 and Uni-1. I built the experimental RL/post-training workflow for Ray3 video generation, including sample generation loops, reward modeling, VLM-as-judge graders, and held-out evaluation, as well as diffusion RL, DPO-style, and GRPO-style experiments. I also contributed RL/data/eval experiments for Uni-1, including OCR-focused rewards, caption/data ablations, and early evaluation.

Before Luma, I completed my Stanford MSCS with Jiajun Wu, where I led IKEA Manuals at Work, a first-author NeurIPS 2024 project on 4D grounding of real-world assembly videos to 3D structure over time. I received my BEng in Electronics and Computer Science from the University of Edinburgh, ranking 2nd in my cohort.

Image/video generation

World models

Structured visual generation

Reward modeling

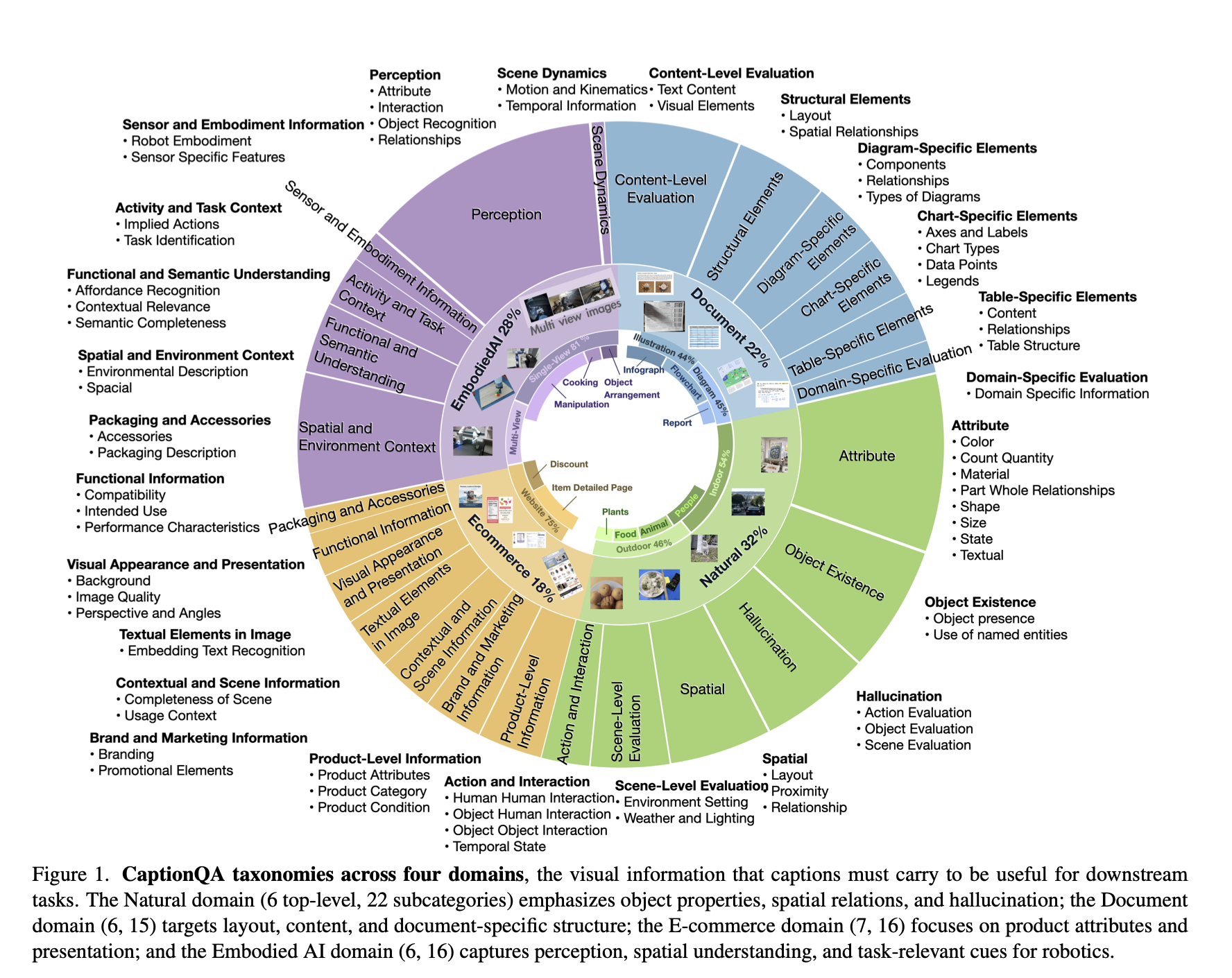

Multimodal evaluation